Google announced today that it is revamping its image search. The aim is to make image search more capable by adding new features, including Google Lens and deeper AI integration into Google Images.

Google has improved its image-recognition algorithms year after year. Rather than relying on a written description of an image, Google can now identify what an image shows by examining its pixels.

Google’s overall goal is to strengthen visual search. The company wants to return more accurate visual results and help people with tasks that depend heavily on visual information.

To that end, it is surfacing more visual content in search results, including images and videos. Other examples include visual recipes, sports clips, and illustrated weather reports.

Google wants to go further still, so the Silicon Valley giant has introduced four new features.

AMP Stories

The first major update is AMP stories generated with artificial intelligence (AI). For example, if a user searches Google for a celebrity or another well-known person, they will be shown AMP stories about that person.

An AMP story will serve as a brief biography of the person, covering basic biographical details, career highlights, and a glimpse into their personal life, such as their marriage.

These biographies are not written by anyone at Google; instead, they are curated by AI using sophisticated machine learning algorithms.

Featured Videos in Google Search

Videos can be a quick and flexible way to research a topic. Users can dive deep into a video and extract all the relevant information. But the videos must be relevant to the user’s search query; otherwise, they are useless.

With the help of computer vision, Google can now analyze the content of videos, identify the most useful information, and present the most relevant videos in a new feature called Featured Videos.

For example, suppose a person planning a trip to New York searches for places to visit there. The new algorithm will understand the query and present videos about famous tourist destinations, so the results will include Madison Square Garden, Central Park, and Broadway.

Websites with better content will be given priority

Google Images will now favor images that are backed by a high-quality web page. Websites that are updated frequently will also be given priority, as will sites where the image is “central to the page, and higher up on the page.”

So if a person is shopping for a specific pair of jeans, a product page dedicated to that pair will rank higher than a general category page for jeans.

Google will also display the title of the page hosting each image as a caption, to make results easier to scan. It will also suggest related images.

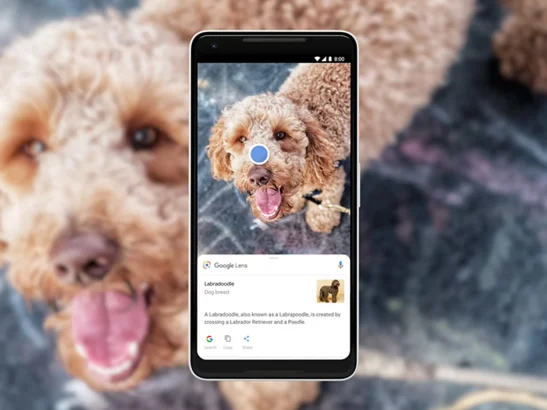

Google Lens will help you explore images within images

Lens uses AI to analyze an image and detect objects within it that may be of interest. If you select one of these objects, Lens will show other relevant images. Many of these results link to product pages where you can buy the item, or let you explore related images in more depth.

Lens will also let users “draw” on an image to select a specific region and then search for it.

Let us know what you think in the comments section below!