At some point in the last two decades, every person with an internet connection has been asked the same deeply strange question: prove you are not a robot. You squinted at warped letters, clicked fire hydrants, listened to garbled audio, and wondered why a website needed confirmation of your own humanity. The question used to feel trivial. Now, as AI systems routinely outscore humans on vision benchmarks and language tests, it has quietly become one of the harder problems in computer science.

The CAPTCHA story is really about an arms race between detection and evasion, and right now the bots are winning. Here is how we got here.

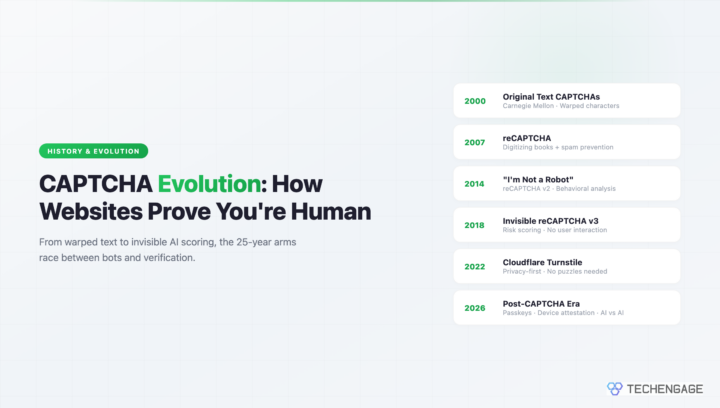

Carnegie Mellon’s Original CAPTCHA (2000)

The word CAPTCHA was coined in 2000 by a Carnegie Mellon University team of Luis von Ahn, Manuel Blum, Nicholas Hopper, and John Langford. The acronym stands for Completely Automated Public Turing test to tell Computers and Humans Apart. That name alone tells you what they were going for: a practical, machine-graded version of Turing’s famous thought experiment, deployed at scale, at no cost per query.

The problem they were solving was real and urgent. Bots were registering thousands of free email accounts on Yahoo and Hotmail to use as spam relays. Online polls were getting gamed by automated scripts. Ticket scalpers were writing programs to buy up concert seats the instant they went on sale. The internet needed a lightweight way to confirm a human was on the other end of a form submission.

Their original approach was elegant in its simplicity: take a word, distort it visually so that OCR software could not read it, and ask the user to type it back. Humans could parse the distorted text through pattern recognition and context. Computers, at that time, could not. The challenge was fully automated to generate and to verify, required no human moderator, and took about three seconds to complete. Yahoo, whose free email signups were a prime bot target, put the team’s design into production, and the CAPTCHA was born. The idea was not entirely new: AltaVista had independently deployed a similar distorted-text filter in 1997 to stop bots from adding URLs to its search index. But von Ahn’s group gave the concept its name and a formal definition, published in a 2003 paper.
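The generate-and-grade loop described here needs no human in it at all. The sketch below is a minimal illustration in Python, not Carnegie Mellon’s code. The word list, the `CaptchaService` class, and the single-use rule are assumptions, and it omits the distortion step that would turn each word into an image.

```python
import secrets

# Hypothetical dictionary; EZ-Gimpy-style challenges drew from a fixed English word list.
WORDS = ["apple", "garden", "window", "river", "candle", "marble"]


class CaptchaService:
    """Generates and grades text challenges with no human in the loop."""

    def __init__(self) -> None:
        # challenge_id -> expected answer, kept only until the first attempt
        self._pending: dict[str, str] = {}

    def new_challenge(self) -> tuple[str, str]:
        """Return (challenge_id, word); the word would be rendered as a distorted image."""
        challenge_id = secrets.token_urlsafe(16)
        word = secrets.choice(WORDS)
        self._pending[challenge_id] = word
        return challenge_id, word

    def verify(self, challenge_id: str, answer: str) -> bool:
        """Grade an answer; each challenge can be attempted only once."""
        expected = self._pending.pop(challenge_id, None)
        if expected is None:
            return False  # unknown or already-used challenge
        return answer.strip().lower() == expected
```

Making each challenge single-use matters. Without that rule, a bot could keep submitting guesses against the same image until one of them matched.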

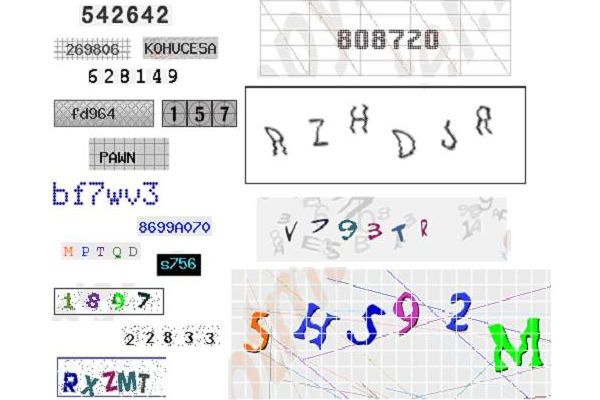

Early and Improved CAPTCHAs

One of the first mass-deployed text CAPTCHAs was EZ-Gimpy, which Yahoo used across its signup flows. EZ-Gimpy drew a single English word from a dictionary, rendered it in a distorted font, and layered a background of random colored pixels as noise. For humans, reading it was mildly annoying. For OCR systems circa 2001, it was genuinely difficult.

That did not last long. By 2002, Greg Mori and Jitendra Malik at UC Berkeley had built an automated solver that cracked EZ-Gimpy with roughly 92% accuracy. Their attack did not depend on cleanly separating the letters. Instead, it used shape matching to propose candidate letters wherever they appeared, then picked the dictionary word that best fit those candidates. The background noise was sparse enough to filter out, and because EZ-Gimpy drew from a limited dictionary, the solver only had to choose among a known set of words. Yahoo’s CAPTCHA, which had seemed robust, was effectively broken within two years of deployment.

The response was predictable. CAPTCHA designers added more noise, more distortion, and more visual complexity. Gimpy, a harder sibling of EZ-Gimpy, scattered several distorted words across the image over heavy background clutter and overlapped them on purpose so that segmentation became harder. Some implementations used multiple fonts in the same word. Others varied the arc and rotation of characters so that no two instances looked alike.

The usability cost of these improvements was real. As CAPTCHAs got harder to machine-read, they got harder to human-read too. Studies from that era found error rates for legitimate users climbing above 20% on the most aggressive designs. People with low vision or dyslexia had error rates even higher. The assumption baked into the original design, that humans and computers existed on opposite ends of a visual recognition spectrum, was already starting to show cracks.

Modern CAPTCHAs and reCAPTCHA

By the mid-2000s, CAPTCHA design had evolved considerably. The most effective systems were using angled baselines, variable character spacing, and deliberate overlap between adjacent letters, all of which attacked the segmentation step that automated solvers depended on. If you could not reliably identify where one letter ended and the next began, you could not match individual characters against a training set. The best CAPTCHAs of that period were genuinely resistant to the OCR-based attacks that had cracked EZ-Gimpy.
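Why overlap was so effective is easiest to see with the simplest segmentation technique: splitting an image at blank pixel columns. The Python sketch below is a generic illustration of that idea, often called vertical projection. It is not the specific solver that broke EZ-Gimpy. The tiny `#`/`.` bitmaps stand in for a binarized CAPTCHA image.

```python
def column_ink(image: list[str]) -> list[int]:
    """Count the dark pixels ('#') in each column of a binarized image."""
    return [sum(row[x] == "#" for row in image) for x in range(len(image[0]))]


def segment_by_gaps(image: list[str]) -> list[tuple[int, int]]:
    """Split an image into (start, end) column spans separated by blank columns.

    `end` is exclusive. Each span is treated as one character candidate.
    """
    spans: list[tuple[int, int]] = []
    start = None
    for x, ink in enumerate(column_ink(image)):
        if ink and start is None:
            start = x                   # entering a run of inked columns
        elif not ink and start is not None:
            spans.append((start, x))    # leaving the run: close the span
            start = None
    if start is not None:               # run touches the right edge
        spans.append((start, len(image[0])))
    return spans


# "H" and "C" with a two-column gap, then the same two letters touching.
spaced = ["#.#..###",
          "###..#..",
          "#.#..###"]
touching = ["#.####",
            "####..",
            "#.####"]

print(segment_by_gaps(spaced))    # [(0, 3), (5, 8)]: two letters
print(segment_by_gaps(touching))  # [(0, 6)]: one merged blob
```

Once the two letters touch, no empty column is left to cut at. The solver sees a single unknown shape instead of two letters it could match against a training set.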

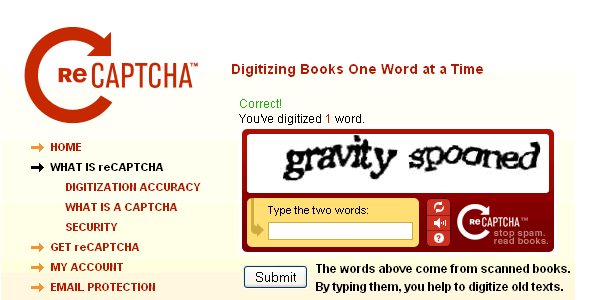

Luis von Ahn, meanwhile, had been thinking about a second use for CAPTCHA-style challenges. He noticed that humans were collectively spending millions of hours per year solving CAPTCHAs, and that this represented an enormous amount of cognitive work that was, from a global perspective, completely wasted. His solution was reCAPTCHA, which he launched in 2007 and sold to Google in 2009.

The idea behind reCAPTCHA was elegant: instead of generating fake distorted words, use scanned text from real books and newspapers that OCR software had already failed to decode. Show the user two words: one with a known answer (used to verify the human) and one from a scanned page that the system was uncertain about. When millions of users solved these challenges, the aggregated answers digitized the uncertain word. Every time someone completed a reCAPTCHA, they were contributing a tiny piece of labor to digitizing books for the Internet Archive and the New York Times archive.
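The two-word scheme can be sketched as a small voting loop. This is a simplified illustration under stated assumptions, not reCAPTCHA’s actual logic. `WordDigitizer`, the three-vote `AGREEMENT_THRESHOLD`, and the identifiers are hypothetical, and the real system used its own rules for weighting and accepting answers.

```python
from collections import Counter, defaultdict

# Hypothetical rule: accept a transcription once this many verified users agree.
AGREEMENT_THRESHOLD = 3


class WordDigitizer:
    def __init__(self) -> None:
        self.votes: defaultdict[str, Counter] = defaultdict(Counter)
        self.transcribed: dict[str, str] = {}

    def submit(self, control_answer: str, control_expected: str,
               unknown_id: str, unknown_guess: str) -> bool:
        """Grade the control word; if it passes, count the guess for the unknown word."""
        if control_answer.strip().lower() != control_expected.lower():
            return False  # failed the known word, so the other guess is not trusted
        if unknown_id not in self.transcribed:
            guess = unknown_guess.strip().lower()
            self.votes[unknown_id][guess] += 1
            if self.votes[unknown_id][guess] >= AGREEMENT_THRESHOLD:
                self.transcribed[unknown_id] = guess
        return True


digitizer = WordDigitizer()
for answer, guess in [("upon", "morning"), ("upn", "evening"),
                      ("upon", "Morning"), ("UPON", "morning ")]:
    digitizer.submit(answer, "upon", "scan-17", guess)
print(digitizer.transcribed)  # {'scan-17': 'morning'}
```

The control word is what keeps the labeling honest. A guess for the unknown word counts only when the same user also got the known word right.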

After Google acquired reCAPTCHA, the dual-purpose model expanded. Google Street View had millions of address number images that needed to be transcribed to build accurate maps. reCAPTCHA was repurposed to present those address numbers as challenges. Users who thought they were proving their humanity to access a website were simultaneously labeling data that improved Google Maps. This was not a secret, but it was not widely understood either. The population of internet users doing unpaid data labeling for one of the world’s most valuable companies numbered in the billions.

Animated and ASCII CAPTCHAs

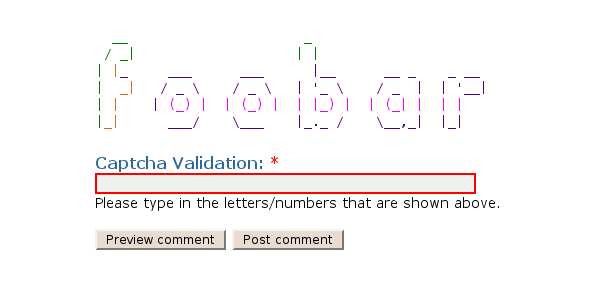

While reCAPTCHA was becoming the dominant standard, a handful of designers were experimenting with entirely different approaches to the human-verification problem. Two of the more creative variants that emerged in the late 2000s were animated GIF CAPTCHAs and ASCII art CAPTCHAs.

Animated CAPTCHAs split a word or number sequence across multiple frames of a GIF. Each frame showed only a portion of the characters, and the human had to mentally assemble the full string by watching the animation cycle through. The theory was that this temporal dimension would be much harder for automated solvers to handle, since most OCR systems operated on static images. In practice, the approach worked for a short window before solvers began capturing individual frames and running analysis on each one independently.

ASCII art CAPTCHAs took a different angle. Instead of rendering characters as pixel images, they built the target letters out of other characters, the way old-school text art was constructed. The idea was that recognizing letterforms built from ASCII symbols would require a different kind of visual processing than standard character recognition. Some implementations used Unicode box-drawing characters or Braille patterns to build the visual structure. These were genuinely creative attempts to stay ahead of solvers, but they also tended to be nearly unreadable on small or low-resolution screens, which limited their practical adoption.

The “I’m Not a Robot” Revolution (reCAPTCHA v2, 2014)

In 2014, Google announced reCAPTCHA v2 with what looked like a joke: a single checkbox that said “I’m not a robot.” No distorted text. No image challenges. Just a checkbox.

The real work happened before you clicked anything. By the time your cursor reached the checkbox, Google had already been analyzing your behavior for several seconds. The signals included the way you moved your mouse, whether your trajectory was smooth or jerky, your typing patterns earlier on the page, how long you had been on the site, your browser fingerprint, cookies from other Google properties, and your IP address’s history. Humans moved cursors in organic, slightly irregular paths. Bots at that time tended to move in straight lines or perfect arcs, and their timing was too consistent. The checkbox was largely theater: for most legitimate users, the behavioral analysis had already made a confident determination before they ever clicked.
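To make the cursor-path idea concrete, here is a deliberately simple Python heuristic built on two of the signals mentioned: how straight the path is and how regular its timing is. Google has not published its model, so everything here is an assumption. The `looks_scripted` rule, its cutoffs, and the sample data are hypothetical, and a real system would combine many more signals.

```python
import math
from statistics import mean, pstdev


def trajectory_features(points: list[tuple[float, float, float]]) -> tuple[float, float]:
    """Return (straightness, timing_cv) for mouse samples given as (t_ms, x, y).

    straightness: straight-line distance / distance actually travelled (1.0 = ruler-straight).
    timing_cv: coefficient of variation of the gaps between samples (0.0 = perfectly regular).
    """
    if len(points) < 2:
        raise ValueError("need at least two samples")
    path = sum(math.dist(a[1:], b[1:]) for a, b in zip(points, points[1:]))
    direct = math.dist(points[0][1:], points[-1][1:])
    straightness = direct / path if path else 1.0
    gaps = [b[0] - a[0] for a, b in zip(points, points[1:])]
    avg_gap = mean(gaps)
    timing_cv = pstdev(gaps) / avg_gap if avg_gap else 0.0
    return straightness, timing_cv


def looks_scripted(points, straight_cutoff: float = 0.99, cv_cutoff: float = 0.05) -> bool:
    """Hypothetical rule: flag paths that are both near-perfectly straight and evenly timed."""
    straightness, timing_cv = trajectory_features(points)
    return straightness >= straight_cutoff and timing_cv <= cv_cutoff


bot = [(i * 10, i * 5, i * 5) for i in range(20)]  # straight line, fixed 10 ms ticks
human = [(0, 0, 0), (13, 4, 9), (31, 11, 14), (38, 22, 15),
         (61, 30, 24), (70, 41, 26), (97, 50, 37)]
print(looks_scripted(bot), looks_scripted(human))  # True False
```

Requiring both conditions keeps the rule conservative. A human who happens to drag in a straight line still varies their timing, and vice versa.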

When the behavioral signals were ambiguous, reCAPTCHA v2 fell back to image grid challenges: “Select all images containing traffic lights.” These were harder for bots because they required genuine object recognition, not just character matching. The images were pulled from Google Street View, which meant that solving them also labeled data Google cared about. The dual-purpose model had evolved from text to images.

reCAPTCHA v2 was widely adopted because it dramatically improved user experience for low-risk visitors while maintaining a meaningful barrier. A normal person on a normal network usually just clicked the checkbox and moved on. Bots, running from data center IP addresses with no browsing history, tended to get the image grids.

Invisible reCAPTCHA v3 (2018)

Google’s reCAPTCHA v3, released in 2018, removed the user interaction entirely. There is no checkbox, no image grid, no distorted text. The system runs silently in the background of a page and produces a risk score between 0.0 and 1.0 for every user session. A score near 1.0 suggests a human. A score near 0.0 suggests a bot.

Website owners integrate this score into their own decision logic. A low-risk checkout session might proceed without any friction. A borderline session might trigger an extra verification step, like an email confirmation. A high-risk session might be blocked outright or shown a friction-heavy alternative flow. The threshold and the response are entirely at the developer’s discretion.
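The decision logic a developer writes around the score can be very small. In this Python sketch, `verdict` stands for the parsed JSON returned by reCAPTCHA v3’s server-side siteverify call, which includes `success`, `score`, and `action` fields. The code that fetches it is omitted. The 0.7 and 0.3 cutoffs and the `"step_up"` outcome are illustrative assumptions; Google’s documentation suggests 0.5 as a starting threshold.

```python
def decide(verdict: dict, expected_action: str,
           allow_at: float = 0.7, block_below: float = 0.3) -> str:
    """Map a parsed reCAPTCHA v3 siteverify response to an application decision.

    The 0.7 / 0.3 thresholds are illustrative assumptions, not Google recommendations.
    """
    # An invalid or expired token, or one minted for a different action, is rejected.
    if not verdict.get("success") or verdict.get("action") != expected_action:
        return "block"
    score = float(verdict.get("score", 0.0))
    if score >= allow_at:
        return "allow"        # low risk: proceed without friction
    if score < block_below:
        return "block"        # high risk: refuse or divert to a heavier flow
    return "step_up"          # borderline: e.g. require an email confirmation


print(decide({"success": True, "score": 0.9, "action": "checkout"}, "checkout"))  # allow
print(decide({"success": True, "score": 0.5, "action": "checkout"}, "checkout"))  # step_up
print(decide({"success": True, "score": 0.9, "action": "login"}, "checkout"))     # block
```

Checking `action` as well as the score stops a token earned on a harmless page from being replayed against a sensitive one.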

This is a genuinely powerful model, but it comes with a significant trade-off: privacy. To score sessions accurately, reCAPTCHA v3 needs to observe behavior across multiple sites that use the service. That means Google is tracking user behavior across a large portion of the web, using CAPTCHA as a cover. European privacy regulators have taken notice. Several French organizations received GDPR enforcement notices related to reCAPTCHA data collection in the early 2020s.

hCaptcha: The Privacy Alternative

hCaptcha launched in 2017 as a direct response to the privacy concerns around Google’s monopoly on bot detection. Built by Intuition Machines, the product positioned itself as a privacy-first alternative that would pay website owners for the human-labeling work their users performed, instead of capturing that value exclusively for Google.

The technical approach was similar to reCAPTCHA: image challenges, behavioral analysis, and a publisher-facing API. But the business model differed in that hCaptcha shared revenue with sites that used it, paying out small amounts per verified human based on the data labeling value generated. For high-traffic sites, this was not trivial money.

Cloudflare switched its entire platform from reCAPTCHA to hCaptcha in April 2020, citing privacy concerns and Google’s plan to start charging for reCAPTCHA at Cloudflare’s volume. Cloudflare serves a massive fraction of web traffic, so this was a significant endorsement. hCaptcha became the default verification layer for a substantial slice of the internet almost overnight. Cloudflare has since built its own solution (more on that below), but hCaptcha remains widely deployed and is particularly common among sites that want to avoid feeding data to Google.

Apple’s Private Access Tokens

Apple introduced Private Access Tokens in 2022 as part of iOS 16 and macOS Ventura, and the concept is worth understanding because it represents a fundamentally different approach to the problem. Rather than asking users to prove their humanity through a challenge, it asks the device to attest that the user is operating a legitimate Apple device with a non-abusive history.

Here is roughly how it works: when a website requests a Private Access Token, the user’s device contacts Apple’s servers. Apple, acting as the attester, verifies that the device is genuine hardware running real software. A separate token issuer (Cloudflare and Fastly were the first) then issues a cryptographic token. That token is presented to the website without revealing anything about the user’s identity. The site knows the request came from a real device. The user never sees a CAPTCHA. Apple never learns what site the user visited.
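The “without revealing anything” part rests on blind signatures. Private Access Tokens build on the IETF Privacy Pass protocols, whose publicly verifiable token type uses RSA blind signatures (RFC 9474). The toy Python sketch below shows only that core trick and makes heavy simplifications: a textbook 12-bit RSA key, no message padding, and no attestation step. All function names are hypothetical.

```python
import hashlib
import math
import secrets

# Toy textbook RSA key: n = 61 * 53. Far too small to be secure; illustration only.
N, E, D = 3233, 17, 2753


def token_message(nonce: bytes) -> int:
    """Client: derive the value to be signed from a random nonce it keeps private."""
    return int.from_bytes(hashlib.sha256(nonce).digest(), "big") % N


def blind(m: int) -> tuple[int, int]:
    """Client: hide m behind a random factor r before sending it to the issuer."""
    while True:
        r = secrets.randbelow(N - 2) + 2
        if math.gcd(r, N) == 1:  # r must be invertible mod N to unblind later
            return (m * pow(r, E, N)) % N, r


def issuer_sign(blinded: int) -> int:
    """Issuer: sign what it was given; it never sees m itself."""
    return pow(blinded, D, N)


def unblind(blind_sig: int, r: int) -> int:
    """Client: strip r, leaving an ordinary signature m^D mod N."""
    return (blind_sig * pow(r, -1, N)) % N


def origin_verify(m: int, sig: int) -> bool:
    """Website: check the signature with the issuer's public key (N, E) only."""
    return pow(sig, E, N) == m


m = token_message(secrets.token_bytes(16))
blinded, r = blind(m)
sig = unblind(issuer_sign(blinded), r)
print(origin_verify(m, sig))  # True
```

Because the issuer only ever sees `blinded`, it cannot later match the unblinded signature the website receives to the signing request it handled. That mismatch is what keeps the token unlinkable.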

For Apple device users, this effectively eliminates CAPTCHAs on participating sites. Cloudflare was among the first major adopters. The obvious limitation is that it only works for Apple hardware, which excludes Android users, desktop Linux users, and anyone on older hardware. It also centralizes trust in Apple’s infrastructure, which carries its own risks.

Cloudflare Turnstile

Cloudflare launched Turnstile in September 2022, partially motivated by its own frustration with the privacy and user experience compromises of existing CAPTCHA solutions. Turnstile is designed to be non-interactive for the vast majority of users: no challenges, no image grids, no checkbox theater. It runs behavioral analysis in the background and issues a token when it is satisfied the visitor is human.

Turnstile’s “Managed” widget mode, which mirrors the Managed Challenge that Cloudflare uses across its own platform, sits in between fully invisible and fully interactive. It handles most sessions silently but can escalate to a lightweight challenge when confidence is low. Critically, Cloudflare says Turnstile does not use verified sessions as labeled training data or to track users across sites. Cloudflare’s business model is network infrastructure, not advertising, which removes the incentive to harvest behavioral data.

Turnstile is free to use, and it does not require a site to route its traffic through Cloudflare; a free Cloudflare account is needed only to obtain the site and secret keys. It has seen broad adoption since launch. For smaller websites that previously relied on reCAPTCHA primarily to avoid dealing with spam, it is a straightforward alternative with better privacy characteristics.
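On the server side, a Turnstile integration comes down to one form-encoded POST to Cloudflare’s siteverify endpoint. The request passes the site’s secret key and the token the widget placed in the `cf-turnstile-response` form field. The Python sketch below uses only the standard library. The helper names are hypothetical. The sketch relies only on the `secret`, `response`, and `remoteip` fields and on the `success` flag in the reply.

```python
import json
import urllib.parse
import urllib.request
from typing import Optional

TURNSTILE_VERIFY_URL = "https://challenges.cloudflare.com/turnstile/v0/siteverify"


def build_verify_request(secret: str, token: str,
                         remote_ip: Optional[str] = None) -> urllib.request.Request:
    """Build the form-encoded POST that asks Cloudflare whether a widget token is valid."""
    fields = {"secret": secret, "response": token}
    if remote_ip:
        fields["remoteip"] = remote_ip  # optional: the visitor's IP address
    body = urllib.parse.urlencode(fields).encode()
    return urllib.request.Request(TURNSTILE_VERIFY_URL, data=body, method="POST")


def verify_turnstile(secret: str, token: str, remote_ip: Optional[str] = None) -> bool:
    """Send the request and report Cloudflare's `success` flag (makes a network call)."""
    with urllib.request.urlopen(build_verify_request(secret, token, remote_ip),
                                timeout=10) as resp:
        return bool(json.load(resp).get("success"))
```

Keeping request construction separate from the network call makes the payload easy to test. In a form handler, a `False` result from `verify_turnstile` should reject the submission, since tokens are short-lived and single-use.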

The CAPTCHA Farm Industry

Before AI could reliably break CAPTCHAs, there was already a well-established industry for bypassing them using human labor. CAPTCHA farms are services that solve CAPTCHAs on behalf of paying clients, in real time, by routing them to low-wage workers who click the right boxes and type the right characters.

The economics are stark. Services like 2captcha and Anti-Captcha charge clients between $1 and $2 per thousand CAPTCHA solutions. The workers solving those CAPTCHAs, primarily in India, Bangladesh, Venezuela, and the Philippines, earn a fraction of that. A skilled CAPTCHA solver working continuously can complete 300-400 per hour. Even if a worker kept the entire fee, 400 solutions at $2 per thousand would gross only $0.80 an hour, so realistic take-home pay is well under a dollar an hour.

The latency is low enough to be practical. Typical turnaround for a submitted CAPTCHA through these services runs between 10 and 30 seconds. For spam campaigns and credential-stuffing attacks operating at scale, this is fast enough. Spam software submits the CAPTCHA image to the farm API, waits for the response, and continues the attack. The entire CAPTCHA security model is circumvented without any AI involvement.

These services operate openly. Their websites have pricing pages, API documentation, and customer support. The legal gray area they occupy, somewhere between legitimate data labeling work and enabling cybercrime, has proven difficult for regulators to address. The workers are doing nothing illegal; the clients frequently are.

AI Solving CAPTCHAs Better Than Humans

The human farm model looked like the ceiling on automated CAPTCHA solving for a long time. Then multimodal AI arrived and changed the calculation completely.

Research published in 2023 found that automated solvers passed modern CAPTCHAs, including reCAPTCHA v2 image challenges, with accuracy between 85% and 100%, above the accuracy of the human participants in the same study. The solvers did not just recognize objects; they coped with the edge cases humans found tricky: fire hydrants partially obscured by parked cars, traffic lights at odd angles, crosswalks where the paint had worn away. Multimodal models such as GPT-4V, released later in 2023, brought that kind of visual recognition to general-purpose AI.

There is a specific irony here worth sitting with. Google used reCAPTCHA challenges to label Street View images. Those labeled images became training data for Google’s computer vision systems. Those computer vision systems, iterated and improved over years, eventually became components of the AI models that can now solve the challenges. The CAPTCHA was not just defeated by AI. It helped build the AI that defeated it.

Text-based CAPTCHAs had been crackable by specialized tools for years before GPT-4V. But image recognition challenges were supposed to be the fallback that held the line. Once those fell to general-purpose AI systems, the logic of challenge-based verification collapsed. Any challenge humans can solve, AI can now be trained to solve. The only question is how long it takes.

Accessibility Nightmares

Lost in most discussions about the CAPTCHA arms race is a quieter problem that has existed since the beginning: CAPTCHAs are genuinely terrible for a large portion of the population.

For blind users, visual CAPTCHA challenges are completely inaccessible by definition. Audio alternatives have existed since the early 2000s, but they are widely considered unusable in practice. Audio CAPTCHAs typically play a sequence of spoken characters over heavy background noise, intended to defeat audio-processing bots the same way visual noise is meant to defeat OCR. The result is something that many hearing users struggle with and that users who rely on audio due to visual impairment often cannot complete at all. Studies have found audio CAPTCHAs require three to four times as many attempts as visual ones for users who depend on them.

Users with dyslexia or cognitive processing differences face similar barriers with distorted text challenges. Even the click-on-images format is problematic for users with motor disabilities who cannot precisely click small grid cells, and for users with cognitive impairments who process spatial tasks differently.

The Americans with Disabilities Act and the EU Web Accessibility Directive both establish legal obligations for web accessibility, and several organizations have faced legal pressure over CAPTCHA implementations. The National Federation of the Blind has repeatedly flagged CAPTCHA as a systemic barrier. The W3C treats the issue as central rather than fringe: it maintains a dedicated note, “Inaccessibility of CAPTCHA”, and WCAG success criterion 1.1.1 requires any CAPTCHA to offer alternative forms that use different types of sensory perception.

The uncomfortable reality is that CAPTCHAs have always imposed a disproportionate burden on users who need the internet most and can navigate it least easily, in exchange for security benefits that have been progressively undermined by the very technology they were meant to stop.

The Post-CAPTCHA Future

Several approaches are converging on a world where CAPTCHAs become unnecessary.

Passkeys, the FIDO2/WebAuthn-based credentials backed by Apple, Google, and Microsoft, replace the password with a cryptographic key pair. The private key stays on the user’s device or in a synced credential manager, and using it usually requires a biometric check or device PIN. Signing in therefore proves possession of that key rather than knowledge of a secret that could be phished or replayed. Bots cannot easily replicate this without access to the user’s devices. Widespread passkey adoption would eliminate a large fraction of the bot problem at the authentication layer, where CAPTCHAs typically live.

Device attestation, as implemented in Apple’s Private Access Tokens and in Android’s Play Integrity API, extends this principle beyond authentication to general web requests. The idea is that a trusted device ecosystem can vouch for requests originating from legitimate users, removing the need for per-request verification. This works well within walled-garden ecosystems and less well at the edges of the web where arbitrary clients connect.

Behavioral biometrics, which analyze typing patterns, mouse dynamics, scrolling behavior, and session timing, offer a continuous passive verification signal rather than a one-time challenge. Companies like BioCatch and NeuroID have built products around this premise. The data is rich enough to distinguish humans from bots with high confidence, and the user never experiences friction. The privacy trade-off is significant: this kind of monitoring requires collecting detailed behavioral data continuously across sessions.

The harder philosophical problem looming beneath all of this is that the distinction between human and bot behavior is narrowing from both directions. Humans increasingly delegate repetitive web tasks to automation. AI agents are beginning to act as genuine users, browsing, purchasing, and submitting forms on behalf of humans who instructed them to do so. The binary question “are you human?” may simply become the wrong question to ask. The more useful question is increasingly “are you authorized, and is this request consistent with expected behavior?” That is a security problem, not a Turing test problem. And the industry, slowly, is starting to treat it as such.

